EMC PowerPath/VE (Virtual Edition) for VMware vSphere

This document describes how to install, configure, and license PowerPath/VE on an ESX vSphere host. For a quick reference of the commands in sequential order, see the quick reference sections at the end of this document. The same method applies to ESXi.

PowerPath/VE (Virtual Edition) manages the I/O and failover of storage devices on a VMware vSphere host. It allows all LUNs to be managed and owned by the PowerPath plug-in in place of VMware’s NMP (native multi-pathing plug-in). There are several benefits to using PowerPath/VE. Most importantly, it provides full multi-pathing intelligence and uses all available paths, in contrast to VMware’s Round Robin, MRU (most recently used), or Fixed Path algorithms. The performance characteristics of PowerPath/VE far exceed those of VMware NMP. Zoning ESX hosts on EMC storage is also far easier with PowerPath/VE resident on the hosts.

VMware vSphere CLI and PowerPath/VE installation

PowerPath/VE is intended to be installed on both ESX and ESXi servers, so all interaction with PowerPath/VE is via remote access, never locally on the host ESX service console. This is where the vSphere CLI comes in handy.

The vSphere CLI can be installed on Windows XP or Windows 7, 32-bit or 64-bit. Commands must be run from within the bin directory unless you define an environment variable for it. After installation, look under the install path for the location of the bin directory.
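As a rough illustration of the environment-variable option, the Python sketch below prepends the CLI's bin directory to PATH for any child process it launches. The directory shown is a hypothetical placeholder, not the real install path, and the helper name is made up for this example.

```python
import os

# Hypothetical placeholder; use the bin directory from your own vSphere CLI install.
CLI_BIN = r"C:\path\to\vsphere-cli\bin"


def env_with_cli_bin(bin_dir, base_env=None):
    """Return a copy of the environment with bin_dir first on PATH."""
    env = dict(os.environ if base_env is None else base_env)
    # Split the existing PATH and drop empty entries.
    parts = [p for p in env.get("PATH", "").split(os.pathsep) if p]
    if bin_dir not in parts:
        parts.insert(0, bin_dir)
    env["PATH"] = os.pathsep.join(parts)
    return env


if __name__ == "__main__":
    env = env_with_cli_bin(CLI_BIN)
    # The first PATH entry is now the CLI bin directory.
    print(env["PATH"].split(os.pathsep)[0])
```

The returned dictionary can be passed as the `env` argument of `subprocess.run` so that the CLI scripts resolve without changing into the bin directory first.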

Among many other things, the CLI lets you query a host with the following command, which produces the same output as the old ESX service console command esxupdate query:

1) Put the target ESX host into maintenance mode

2) vihostupdate.pl --query --server yourvspherehost

This command queries the host for all installed components, OS and patch version.

You will be prompted for credentials for each and every command. Use root.

The output lists the components installed on the host. Note that PowerPath is not listed until it is installed.

Following these instructions is all that is required for a successful install.

The PowerPath/VE installation command is shown below. Replace the server name, install path, and version where needed. It’s best to copy this string into Notepad and then into the CLI to remove any formatting.

3) vihostupdate.pl --server yourvspherehost --install --bundle=\\your-unc-path\EMCPower.VMWARE.5.4.SP1.b033.zip

After this command executes (it takes approx. 2 minutes to complete), you will see the following:

DO NOT REBOOT at this point. If the host you are performing the install on is attached to an EMC Symmetrix or CLARiiON array, you must follow the LUNZ claim rule procedure described later in this document before rebooting. Claim rules can ONLY be modified on I/O active LUNs after PowerPath is installed and prior to the post-install reboot.

Run the query command again to validate the install.

4) vihostupdate.pl --query --server yourvspherehost

Note that PowerPath/VE is now listed in the output.

5) Reboot
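The command-line steps above can be scripted. The following Python sketch only assembles the argument lists and checks query output for the string "PowerPath". It assumes vihostupdate.pl is invoked exactly as written in this document; the function names are illustrative and not part of any VMware or EMC tool. Because the layout of the query output is not reproduced here, the check is a plain case-insensitive substring search rather than a real parser.

```python
def query_cmd(host, script="vihostupdate.pl"):
    """Argument list for steps 2 and 4: list installed components on the host."""
    return [script, "--query", "--server", host]


def install_cmd(host, bundle, script="vihostupdate.pl"):
    """Argument list for step 3: install the PowerPath/VE bundle."""
    if not bundle.lower().endswith(".zip"):
        raise ValueError("expected a .zip bundle, got %r" % bundle)
    return [script, "--server", host, "--install", "--bundle=" + bundle]


def powerpath_listed(query_output):
    """True if any line of the query output mentions PowerPath (assumed marker)."""
    return any("powerpath" in line.lower() for line in query_output.splitlines())


if __name__ == "__main__":
    host = "yourvspherehost"
    bundle = r"\\your-unc-path\EMCPower.VMWARE.5.4.SP1.b033.zip"
    print(" ".join(query_cmd(host)))
    print(" ".join(install_cmd(host, bundle)))
```

Each list can be handed to `subprocess.run`; the tool will still prompt for credentials on every invocation, and the reboot itself is left as a manual step.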

PowerPath/VE licensing and rpowermt remote administration

Licenses are installed and registered via the EMC rpowermt utility, also referred to as RTOOLS. You can run the tool from a standard Microsoft Command Prompt. rpowermt is on the server’s path, so commands can be executed from any directory.

The EMC remote administration tool "rpowermt" finds the license files through the PPMT_LIC_PATH environment variable, which is set with the following command:

set PPMT_LIC_PATH=E:\PowerPathVE\Licenses

Once defined, the host can be licensed using the following command:

rpowermt host=yourvspherehost register

Once registered, verify with this command:

rpowermt host=yourvspherehost check_registration

The output will be similar to this:

PowerPath License Information:

——————————

Host ID : some ID hash here

Type : unserved (uncounted)

State : licensed

Days until expiration : (non-expiring)

License search path: E:\PowerPathVE\Licenses

License file(s): E:\PowerPathVE\Licenses\license.lic

E:\PowerPathVE\Licenses\ license.lic

E:\PowerPathVE\Licenses\ license.lic

E:\PowerPathVE\Licenses\ license.lic

E:\PowerPathVE\Licenses\ license.lic

E:\PowerPathVE\Licenses\ license.lic

End
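If the registration check needs to be automated, the output above can be read mechanically. The Python sketch below is a minimal example that assumes the output keeps the "key : value" layout shown in this document, with any additional license files on their own lines. The helper names are hypothetical, and the rpowermt argument order simply mirrors the commands above.

```python
import os

SAMPLE = r"""PowerPath License Information:
----------
Host ID : some ID hash here
Type : unserved (uncounted)
State : licensed
Days until expiration : (non-expiring)
License search path: E:\PowerPathVE\Licenses
License file(s): E:\PowerPathVE\Licenses\license.lic
E:\PowerPathVE\Licenses\license.lic
"""


def rpowermt_cmd(host, action):
    """Argument list in the order used in this document, e.g. action='register'."""
    return ["rpowermt", "host=" + host, action]


def license_env(lic_path):
    """Copy of the environment with PPMT_LIC_PATH set for a child process."""
    return dict(os.environ, PPMT_LIC_PATH=lic_path)


def parse_registration(text):
    """Collect 'key : value' pairs; path-like lines extend the license file list."""
    info, files = {}, []
    for raw in text.splitlines():
        line = raw.strip()
        if not line or not any(ch.isalnum() for ch in line):
            continue  # blank line or separator rule
        key, sep, value = line.partition(": ")
        if sep:
            key = key.strip()
            if key.lower().startswith("license file"):
                files.append(value.strip())
            else:
                info[key] = value.strip()
        elif "\\" in line or "/" in line:
            files.append(line)  # continuation line naming another license file
    info["License files"] = files
    return info


def is_licensed(info):
    """True when the State field reads 'licensed'."""
    return info.get("State", "").lower() == "licensed"


if __name__ == "__main__":
    print(" ".join(rpowermt_cmd("yourvspherehost", "check_registration")))
    print(is_licensed(parse_registration(SAMPLE)))
```

In practice the text would come from running the check_registration command with `subprocess.run(..., capture_output=True, text=True, env=license_env(...))` instead of the embedded sample.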

The ESX or ESXi host should now have PowerPath/VE installed and licensed in “unserved” mode. PowerPath/VE will be set as the owner of all Datastore LUNs.

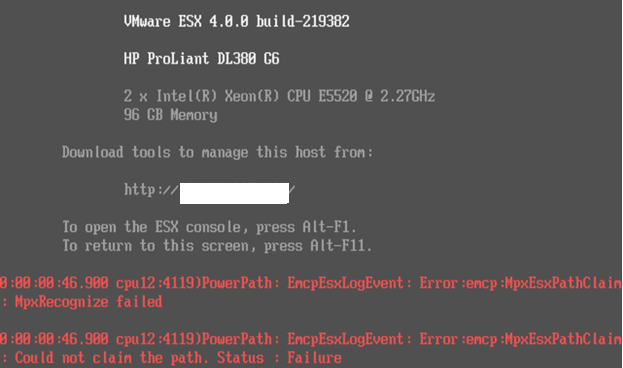

During installation on ESX hosts attached to EMC Symmetrix with LUNZ presented (C0:T0:L0), ESX claimrules must be altered to account for this. EMC CLARiiON attached hosts are not affected. LUNZ is also known as LUN Zero (0).

The presence of these devices causes several issues and errors on the ESX console. See error:

Use the vSphere CLI to execute commands and queries against ESX 4.0 vSphere hosts.

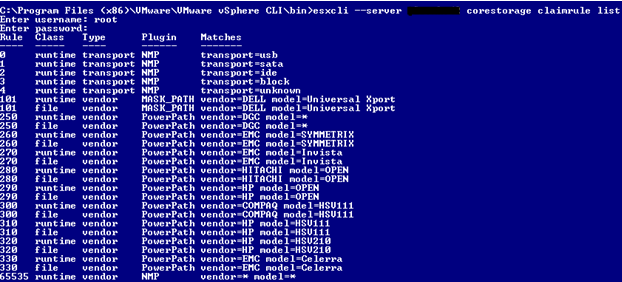

Below is a post PowerPath/VE installation corestorage claimrule definition. Note that PowerPath “owns” rules 250 and above after the installation.

esxcli --server yourvspherehost corestorage claimrule list

ESX 4 (vSphere) introduces the concept of a PSA, the pluggable storage architecture. VMware corestorage claim rules control how the ESX/ESXi server utilizes storage presented to it. These claim rules are stored in the esx.conf file, which is parsed during each reboot or each rescan of an HBA device by the host.

Run the following commands, in this order, against each individual ESX/ESXi host. Replace the hostname in the examples below with the desired host name. The commands that alter claim rules must be run for each HBA on the host that is attached to the SAN; the examples use vmhba1 and vmhba2.

1) esxcli --server yourvspherehost corestorage claimrule list

This command lists the claim rules for review.

2) esxcli --server yourvspherehost corestorage claimrule add --plugin MASK_PATH --rule 102 --type location -A vmhba1 -C 0 -T 0 -L 0

This command creates a new claim rule “102” that masks LUNZ from the host on HBA1.

3) esxcli --server yourvspherehost corestorage claimrule add --plugin MASK_PATH --rule 103 --type location -A vmhba2 -C 0 -T 0 -L 0

This command creates a new claim rule “103” that masks LUNZ from the host on HBA2.

4) esxcli --server yourvspherehost corestorage claimrule list

List the claim rules again for review. Note that the newly added rules 102 and 103 are only half in place until a mandatory reboot fully reloads the host’s storage system: until the reboot occurs, you will see only the file entry for each rule and not the runtime entry.

Before reboot output:

102 file location MASK_PATH adapter=vmhba1 channel=0 target=0 lun=0

103 file location MASK_PATH adapter=vmhba2 channel=0 target=0 lun=0

After reboot output:

102 runtime location MASK_PATH adapter=vmhba1 channel=0 target=0 lun=0

102 file location MASK_PATH adapter=vmhba1 channel=0 target=0 lun=0

103 runtime location MASK_PATH adapter=vmhba2 channel=0 target=0 lun=0

103 file location MASK_PATH adapter=vmhba2 channel=0 target=0 lun=0

5) esxcli --server yourvspherehost corestorage claimrule load

This command loads the new claim rules into memory.

6) esxcli --server yourvspherehost corestorage claimrule run

This command runs the new esx.conf corestorage claimrule definitions.

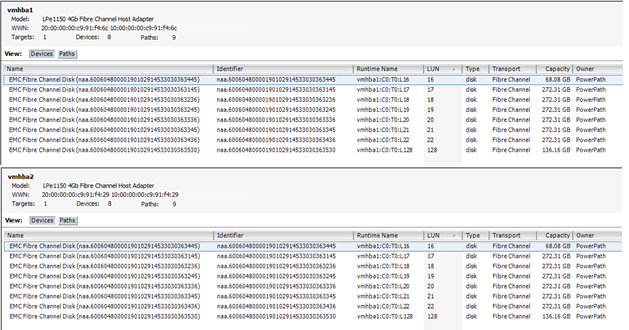

7) Reboot the ESX/ESXi host and verify that the desired LUN is masked by looking in vCenter > Configuration tab > Storage Adapters. Highlight vmhba1 and vmhba2 separately and verify that the LUN you wanted masked does not appear.
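Since the add command must be repeated for every SAN-attached HBA, steps 1 through 6 can be generated rather than typed. The Python sketch below builds the same esxcli argument lists used above, numbering the rules from 102 upward. The function name and the guard against rule 250 (taken from the note that PowerPath owns rules 250 and above) are choices made for this example, not behavior of esxcli itself.

```python
def mask_lunz_plan(host, adapters, first_rule=102):
    """Ordered esxcli argument lists for steps 1-6, one 'add' per adapter."""
    last_rule = first_rule + len(adapters) - 1
    if last_rule >= 250:
        # Rules 250 and above belong to PowerPath after installation.
        raise ValueError("rule %d collides with PowerPath-owned rules" % last_rule)
    base = ["esxcli", "--server", host, "corestorage", "claimrule"]
    plan = [base + ["list"]]
    for offset, adapter in enumerate(adapters):
        plan.append(base + [
            "add", "--plugin", "MASK_PATH",
            "--rule", str(first_rule + offset),
            "--type", "location",
            "-A", adapter, "-C", "0", "-T", "0", "-L", "0",
        ])
    plan += [base + ["list"], base + ["load"], base + ["run"]]
    return plan


if __name__ == "__main__":
    for argv in mask_lunz_plan("yourvspherehost", ["vmhba1", "vmhba2"]):
        print(" ".join(argv))
```

The reboot in step 7 and the check in vCenter remain manual; the sketch only prints the commands so they can be reviewed before anything is run against a host.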

See before view with LUN 0 listed as a 2.81MB device owned by NMP.

After the claim rules are set to mask the LUNZ devices on each of the host’s HBAs following the instructions above, the console errors cease and the LUNZ device is no longer presented to the host.

If you skipped licensing, go back and complete the steps in the section “PowerPath/VE licensing and rpowermt remote administration”.
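The before-reboot and after-reboot listings shown in step 4 differ only in whether each rule has a runtime entry next to its file entry, which is easy to check in code. The Python sketch below assumes the column order seen in those listings (rule number, class, type, plugin, then key=value matches); the function names are hypothetical, and other esxcli versions may print additional columns.

```python
BEFORE = """\
102 file location MASK_PATH adapter=vmhba1 channel=0 target=0 lun=0
103 file location MASK_PATH adapter=vmhba2 channel=0 target=0 lun=0
"""

AFTER = """\
102 runtime location MASK_PATH adapter=vmhba1 channel=0 target=0 lun=0
102 file location MASK_PATH adapter=vmhba1 channel=0 target=0 lun=0
103 runtime location MASK_PATH adapter=vmhba2 channel=0 target=0 lun=0
103 file location MASK_PATH adapter=vmhba2 channel=0 target=0 lun=0
"""


def parse_claimrules(text):
    """Map rule number -> plugin, type, matches, and the classes seen for it."""
    rules = {}
    for raw in text.splitlines():
        fields = raw.split()
        if len(fields) < 4 or not fields[0].isdigit():
            continue  # header, blank line, or anything that is not a rule row
        number, rule_class, rule_type, plugin = fields[:4]
        matches = dict(f.split("=", 1) for f in fields[4:] if "=" in f)
        entry = rules.setdefault(int(number), {
            "classes": set(), "type": rule_type,
            "plugin": plugin, "matches": matches,
        })
        entry["classes"].add(rule_class)
    return rules


def rules_awaiting_reboot(rules):
    """Rule numbers that have a file entry but no runtime entry yet."""
    return sorted(n for n, e in rules.items()
                  if "file" in e["classes"] and "runtime" not in e["classes"])


if __name__ == "__main__":
    print(rules_awaiting_reboot(parse_claimrules(BEFORE)))  # [102, 103]
    print(rules_awaiting_reboot(parse_claimrules(AFTER)))   # []
```

An empty result after the reboot indicates that every masked rule is present in both the file and runtime classes, matching the after-reboot listing.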

PowerPath install commands quick reference

vihostupdate.pl --query --server yourvspherehost

vihostupdate.pl --server yourvspherehost --install --bundle=\\your-unc-path\EMCPower.VMWARE.5.4.SP1.b033.zip

vihostupdate.pl --query --server yourvspherehost

PowerPath licensing commands quick reference

set PPMT_LIC_PATH=E:\PowerPathVE\Licenses

rpowermt host=yourvspherehost register

rpowermt host=yourvspherehost check_registration

ESX Claimrules quick reference

esxcli --server yourvspherehost corestorage claimrule list

esxcli --server yourvspherehost corestorage claimrule add --plugin MASK_PATH --rule 102 --type location -A vmhba1 -C 0 -T 0 -L 0

esxcli --server yourvspherehost corestorage claimrule add --plugin MASK_PATH --rule 103 --type location -A vmhba2 -C 0 -T 0 -L 0

esxcli --server yourvspherehost corestorage claimrule list

esxcli --server yourvspherehost corestorage claimrule load

esxcli --server yourvspherehost corestorage claimrule run

If deletion of a claimrule is required:

esxcli --server yourvspherehost corestorage claimrule delete --rule ###
